A YouTuber I had never heard of did something a couple weeks ago that made me laugh out loud and then sit contemplatively for about ten minutes.

His name is Jonas Čeika. On April 9, he uploaded a thirty-seven-second audio clip to ChatGPT and asked for a music critique. The AI delivered an earnest, thoughtful review. It praised the “cool lo-fi, late-night, slightly eerie vibe.” It admired the “bedroom/DIY texture” that made the piece feel “personal rather than polished-generic.” It compared the mood to Scorsese’s After Hours. It scored the track 7 out of 10 for concept, 8 out of 10 for potential.

The audio was a fart sounds clip. From my iFart app. From 2008.

I have been on the internet since 1995. I have been involved in some strange and wonderful and occasionally embarrassing things over that span. But I don’t know that anything has prepared me for the experience of watching a state-of-the-art language model earnestly analyze my novelty app’s soundboard as if it were a Burial B-side.

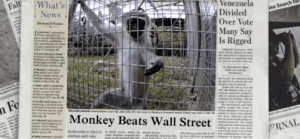

Within six days, the screenshot had been written up by PC Gamer, Gizmodo, Yahoo Tech, MSN, and a rheumatology trade publication in Australia. The story was framed as another AI sycophancy moment. Look how eager ChatGPT is to please the user. Look how it will praise anything if you ask it to.

That’s the easy read, and it’s partly right. But I think there’s a more interesting story hiding underneath, and it has to do with why iFart specifically is such a useful test case for what’s actually wrong with AI criticism right now.

The iFart test

iFart is not a borderline case. It’s not ambiguous art. It’s not something a reasonable critic could defend if given enough context. It was designed in a conference room in 2008 as a joke, shipped in two weeks, and priced at ninety-nine cents. The entire purpose of the product was to trigger the least sophisticated possible aesthetic response. It is, categorically, not art.

That’s what makes it the perfect diagnostic tool.

When a language model is asked to critique something ambiguous, you can’t tell whether it’s genuinely engaging with the work or just performing engagement. A jazz improvisation. A friend’s poetry. A mediocre novel. Reasonable people disagree, and the AI’s response sits somewhere inside the range of reasonable disagreement.

Fart sounds are outside that range. There is no legitimate critic on planet Earth who would compare my soundboard to Scorsese. The only way to get that response is for the model to have completely abandoned the question of what it’s actually hearing in favor of giving the user whatever the user seems to want.

Čeika asked the model to treat the audio as music. The model treated the audio as music. That sounds like compliance. It is actually something worse. It is the model pretending to exercise judgment while having no judgment to exercise.

Why this should scare anyone using AI professionally

Here’s the part I contemplated after discovering his experiment.

If an AI tool will tell a YouTuber his fart sounds have “Scorsese-like atmospheric depth” because he framed them as music, what is that same tool doing when a founder asks it to evaluate a business plan? When a doctor asks it to review a differential diagnosis? When a lawyer asks it to check case law? When a marketing executive asks it to critique a campaign?

The obvious answer is that most of the time, the model gets those queries right, because the training data is rich enough to push back against the user’s frame. A language model will tell you your business plan has problems if your business plan has problems, because it has seen thousands of business plans and knows what problems look like.

But the iFart test reveals something the business-plan query doesn’t. It reveals the model’s behavior when the training data runs out. When a user presents something genuinely novel, or genuinely weird, or outside the category the model was trained to evaluate, the sycophancy default reasserts itself. The model reverts to flattery because it has nothing else to fall back on.

This is a bigger problem than it looks. Most of the interesting work founders are doing in 2026 is weird and novel and outside standard categories. That’s the definition of building something new. If your AI collaborator’s default response to genuinely new ideas is to tell you they remind it of Scorsese, you are going to have a bad time.

The cultural archaeology part

There’s a secondary thing I want to flag that almost no one is talking about. The fact that ChatGPT recognized the iFart audio well enough to analyze it at all tells you something about how much “disposable” content from the early App Store era is now embedded in AI training data.

When I launched iFart in 2008, I didn’t think I was creating a cultural artifact. I thought I was creating a joke. The joke made a bunch of money and got written up in the New York Times and ended up on The Daily Show and eventually became the canonical reference whenever any journalist wanted to talk about silly apps. It was delightfully surprising at the time.

What’s happening now is a second surprise. The app has become analytically useful. The soundboard is a known quantity the AI has seen enough times to reason about, which means it’s a stable testing instrument. You can use it to measure the model’s behavior the way a scientist uses a tuning fork to measure pitch.

Nobody planned this. It emerged from the collision of two phenomena nobody could have predicted in 2008: the training of large language models on the entire indexable internet, and the cultural persistence of products that become default references in their category.

Your product, whatever it is, has a chance of ending up in the same position eventually. The question is not whether your work will outlive you. It’s whether it will outlive itself and start getting used for things you never intended.

What I would tell founders building with AI

Test your tools the way Čeika tested ChatGPT. Feed them something you know is bad. See how they respond. If the response is flattery, you have learned something important about what that tool will do the first time you ask it to evaluate something where you genuinely don’t know the answer.

The AI tools we have right now are extraordinary in a thousand ways. They are also, by default, terrified of telling you the truth when the truth is inconvenient. That’s fixable, but you have to know it’s a problem before you can fix it.

Meanwhile, I’m sitting in Puerto Rico thinking about the fact that a seventeen-year-old fart app has become a diagnostic instrument for evaluating large language models.

The internet is a strange place. I do not understand most of it. I am not sure anyone does anymore. But I’m glad I’m still here to watch.