There is a particular confidence that comes with spotting something fake.

You can hear it in the comments. The short declarative sentences. The technical vocabulary deployed with just enough specificity to sound credible. “No soft blending.” “Zero cohesion of elements.” “High school art 101 work.” “It’s garbage.” These are not casual dismissals. These are people performing expertise, publicly, with certainty.

They were performing it about a real Monet.

The Setup

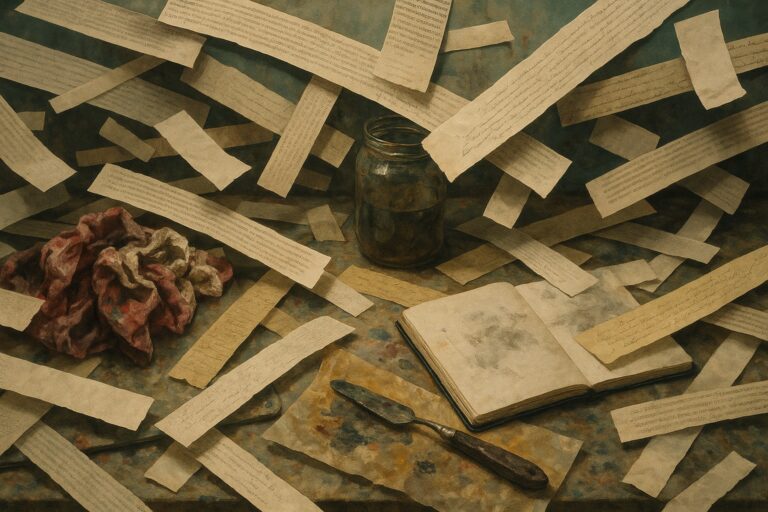

Artist SHL0MS, whose career has been built on experiments that use the internet’s own reflexes against it, posted an image on X. He labeled it AI-generated art in the style of Monet. He did not need to do anything else.

The comments came in waves. Users lined up to name every technical flaw. The brushwork was wrong. The color palette was inconsistent. The composition lacked the visual logic of a true Impressionist master. The pile-on was confident, specific, and almost entirely invented.

Then came the reveal. The painting was a real Monet water lilies piece from the 1800s. One of the most studied, reproduced, and celebrated painters in the history of Western art had just been judged a high school amateur because someone put the wrong label on his work.

Two Responses to Being Wrong

When the truth landed, the thread split in a way that was more revealing than the original mistake.

Some people laughed at themselves and acknowledged the trap. They understood, correctly, that this was the point. SHL0MS had not tricked them to be cruel. He had tricked them to show them something true about how they were seeing.

Others dug in. The Monet was “overrated anyway.” The style “doesn’t hold up to modern standards.” The experiment was “a cheap gotcha.” These are the responses of people for whom admitting a mistake is a larger problem than making one. Their position had to survive the new information, even if it meant tying themselves into logical knots to make it happen.

Both camps told us something. But the second camp told us more.

What the Anxiety Actually Sounds Like

The AI discourse has a particular texture. It is loud, and it is certain, and it moves very fast.

Every week, someone posts a viral thread about AI-generated images flooding some creative space, AI text polluting some editorial outlet, AI audio replacing some voice actor, AI something destroying some something. The criticism often sounds analytical. It borrows the vocabulary of craft. Brushwork. Coherence. Authenticity. Voice.

But the SHL0MS experiment pulled the curtain on what a significant portion of that discourse actually is: source anxiety wearing the costume of aesthetic judgment.

People were not detecting real flaws in the Monet. They were performing detection because the label told them they were supposed to detect flaws. The feeling of spotting AI-generated work was real. The actual observation was not.

This matters because the discourse shapes real decisions. Platforms build detection tools around it. Editors make publication policies around it. Audiences form habits around it. When those policies and habits are built on source anxiety rather than actual quality assessment, they work inconsistently and they punish the wrong things.

The Experiment as Art

SHL0MS has done this kind of work before. Forcing the audience to be the subject. Using the internet’s confidence against itself. There is a tradition here: the hidden camera sketch, the performance art reveal, the Borat-style structured impersonation.

What makes this particular experiment work is that it required nothing from the participants except their existing attitudes. No one was lied to about their identity. No one was placed in an artificial situation. They were just given a label, and the label did everything else.

That is a precise observation about how AI anxiety functions right now. It does not require engagement with the actual work. It activates on contact with the source signal.

The word “AI” on a piece of art now carries so much charge that it overwhelms the visual information. The eyes report one thing. The brain, having already received the source data, files a different verdict entirely.

The Honest Reading

The SHL0MS experiment is not an argument that AI-generated art is good, or that concerns about it are groundless, or that everyone criticizing AI work is wrong.

It is an argument about mechanism. It reveals that a meaningful portion of the confident, specific, detailed criticism appearing in AI art discourse is not generated by looking at the work. It is generated by reading the label and then constructing a justification that matches the emotional verdict already reached.

That is a different claim. It is a narrower and more accurate one.

Real concerns about AI in creative industries are worth having carefully. The economic displacement, the consent questions around training data, the way algorithmic optimization can homogenize creative output over time: these are serious subjects. They deserve serious engagement.

What they do not deserve is to be borrowed as cover for criticism that is actually just source anxiety dressed up as craft analysis. The Monet thread is the clearest demonstration in recent memory that the two things are not the same.

The Ones Who Doubled Down

Here is the part that stays with you.

After the reveal, after SHL0MS confirmed the provenance, after the original Monet was identified and linked, a portion of the thread still could not quite let go. The painting was still somehow a little off. Monet’s late style was never really that impressive. The experiment was rigged.

These are the most interesting people in the thread. Not because they were more fooled than the others, but because they illuminate the specific mechanism by which people protect prior judgments when those judgments turn out to be wrong. The verdict came first. The evidence was always going to be interpreted around it.

That is not an internet problem. That is a human problem. The internet just makes it visible, public, and searchable.

SHL0MS gave us a clean record of how confirmation bias sounds when it is running at full speed and has no idea it is being watched.